Save and Restore Cache from GCS

Modern continuous integration systems execute pipelines inside ephemeral environments that are provisioned solely for pipeline execution and are not reused from prior pipeline runs. As builds often require downloading and installing many library and software dependencies, caching these dependencies for quick retrieval at runtime can save a significant amount of time.

In addition to loading dependencies faster, you can also use caching to share data across stages in your Harness CI pipelines. You need to use caching to share data across stages because each stage in a Harness CI pipeline has its own build infrastructure.

This topic explains how you can use the Save Cache to GCS and Restore Cache from GCS steps in your CI pipelines to save and retrieve cached data from Google Cloud Storage (GCS) buckets. For more information about caching in GCS, go to the Google Cloud documentation on caching. In your pipelines, you can also save and restore cached data from S3 or use Harness Cache Intelligence.

You can't share access credentials or other Text Secrets across stages.

This topic assumes you have created a pipeline and that you are familiar with the following:

Requirements

You need a dedicated GCS bucket for your Harness cache operations. Don't save files to the bucket manually. The restore cache operation fails if the bucket includes any files that don't have a Harness cache key.

You need a GCP connector that authenticates through a GCP service account key. To do this:

- In GCP, create an IAM service account. Note the email address generated for the IAM service account; you can use this to identify the service account when assigning roles.

- Assign the required GCS roles to the service account, as described in the GCP connector settings reference.

- Generate a JSON-formatted service account key.

- In the GCP connector's Details, select Specify credentials here, and then provide the service account key for authentication. For more information, refer to Store service account keys as Harness secrets in the GCP connector settings reference.

Add save and restore cache steps

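You can add these steps in the Visual editor or the YAML editor. The Visual editor instructions are listed first, followed by the YAML editor instructions.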

- Go to the pipeline and stage where you want to add the Save Cache to GCS step.

- Select Add Step, select Add Step again, and then select Save Cache to GCS in the Step Library.

- Configure the Save Cache to GCS step settings.

- Select Apply changes to save the step.

- Go to the stage where you want to add the Restore Cache from GCS step.

- Select Add Step, select Add Step again, and then select Restore Cache from GCS in the Step Library.

- Configure the Restore Cache from GCS step settings. The bucket and key must correspond with the bucket and key settings in the Save Cache to GCS step.

- Select Apply changes to save the step, and then select Save to save the pipeline.

To add a Save Cache to GCS step in the YAML editor, add a type: SaveCacheGCS step, then define the Save Cache to GCS step settings. The following are required:

- connectorRef: The GCP connector ID.

- bucket: The GCS cache bucket name.

- key: The GCS cache key to identify the cache.

- sourcePaths: Files and folders to cache. Specify each file or folder separately.

- archiveFormat: The archive format. The default format is Tar.

Here is an example of the YAML for a Save Cache to GCS step.

- step:
    type: SaveCacheGCS
    name: Save Cache to GCS_1
    identifier: SaveCachetoGCS_1
    spec:
      connectorRef: account.gcp
      bucket: ci_cache
      key: gcs-{{ checksum filePath1 }} # example cache key based on file checksum
      sourcePaths:
        - directory1 # example first directory to cache
        - directory2 # example second directory to cache
      archiveFormat: Tar

To add a Restore Cache from GCS step in the YAML editor, add a type: RestoreCacheGCS step, and then define the Restore Cache from GCS step settings. The following settings are required:

- connectorRef: The GCP connector ID.

- bucket: The GCS cache bucket name. This must correspond with the Save Cache to GCS bucket.

- key: The GCS cache key to identify the cache. This must correspond with the Save Cache to GCS key.

- archiveFormat: The archive format, corresponding with the Save Cache to GCS archiveFormat.

Here is an example of the YAML for a Restore Cache from GCS step.

- step:
    type: RestoreCacheGCS
    name: Restore Cache From GCS_1
    identifier: RestoreCacheFromGCS_1
    spec:
      connectorRef: account.gcp
      bucket: ci_cache
      key: gcs-{{ checksum filePath1 }} # example cache key based on file checksum
      archiveFormat: Tar

Save Cache to GCS step settings

Depending on the stage's build infrastructure, some settings may be unavailable or located under Optional Configuration in the visual pipeline editor. Settings specific to containers, such as Set Container Resources, are not applicable when using the step in a stage with VM or Harness Cloud build infrastructure.

Name

Enter a name summarizing the step's purpose. Harness automatically assigns an Id (Entity Identifier Reference) based on the Name. You can change the Id.

GCP Connector

The Harness connector for the GCP account where you want to save the cache. For more information, go to Google Cloud Platform (GCP) connector settings reference.

This step supports GCP connectors that use access key authentication. It does not support GCP connectors that inherit delegate credentials.

Bucket

The GCS destination bucket name.

Key

The key to identify the cache.

You can use the checksum macro to create a key based on a file's checksum, for example: myApp-{{ checksum filePath1 }}

With this macro, Harness checks if the key exists and compares the checksum. If the checksum matches, then Harness doesn't save the cache. If the checksum is different, then Harness saves the cache.

The backslash character isn't allowed as part of the checksum value here. This is a limitation of the Go language (golang) template. You must use a forward slash instead.

- Incorrect format: cache-{{ checksum ".\src\common\myproj.csproj" }}

- Correct format: cache-{{ checksum "./src/common/myproj.csproj" }}

Source Paths

A list of the files/folders to cache. Add each file/folder separately.

Archive Format

Select the archive format. The default archive format is Tar.

Override Cache

Select this option if you want to override the cache if a cache with a matching Key already exists.

By default, the Override Cache option is set to false (unselected).
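
In YAML, this option might look like the following sketch. The `override` field name is an assumption that this topic doesn't confirm, so check it against the YAML that the Visual editor generates when you select Override Cache. The connector, bucket, key, and path values are the placeholder values used elsewhere in this topic.

```yaml
- step:
    type: SaveCacheGCS
    name: Save Cache to GCS_1
    identifier: SaveCachetoGCS_1
    spec:
      connectorRef: account.gcp
      bucket: ci_cache
      key: cache-{{ checksum "package-lock.json" }}
      sourcePaths:
        - node_modules
      archiveFormat: Tar
      override: true # assumed field name; replaces an existing cache that has the same key
```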

Run as User

Specify the user ID to use to run all processes in the pod if running in containers. For more information, go to Set the security context for a pod.

Set Container Resources

Maximum resource limits for the container at runtime:

- Limit Memory: Maximum memory that the container can use. You can express memory as a plain integer or as a fixed-point number with the suffixes G or M. You can also use the power-of-two equivalents, Gi or Mi. Do not include spaces when entering a fixed value. The default is 500Mi.

- Limit CPU: The maximum number of cores that the container can use. CPU limits are measured in CPU units. Fractional requests are allowed. For example, you can specify one hundred millicpu as 0.1 or 100m. The default is 400m. For more information, go to Resource units in Kubernetes.
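
As a rough sketch, the two limits could be expressed in the step YAML as shown below. The `resources.limits.memory` and `resources.limits.cpu` field names are assumptions based on the setting names above, and the values are the documented defaults. These limits only apply when the step runs in a container.

```yaml
- step:
    type: SaveCacheGCS
    name: Save Cache to GCS_1
    identifier: SaveCachetoGCS_1
    spec:
      connectorRef: account.gcp
      bucket: ci_cache
      key: cache-{{ checksum "package-lock.json" }}
      sourcePaths:
        - node_modules
      archiveFormat: Tar
      resources: # assumed field names; verify against the YAML the Visual editor generates
        limits:
          memory: 500Mi # power-of-two suffix, no spaces
          cpu: 400m # 400 millicpu, equivalent to 0.4 cores
```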

Timeout

Set the timeout limit for the step. Once the timeout limit is reached, the step fails and pipeline execution continues. To set skip conditions or failure handling for steps, go to:

Stage setting: Shared paths

Pipeline steps within a stage share the same workspace. You can optionally share paths outside the workspace between steps in your stage by setting spec.sharedPaths.

stages:
  - stage:
      spec:
        sharedPaths:
          - /example/path # directory outside workspace to share between steps

Restore Cache from GCS step settings

Depending on the stage's build infrastructure, some settings may be unavailable or located under Optional Configuration in the visual pipeline editor. Settings specific to containers, such as Set Container Resources, are not applicable when using the step in a stage with VM or Harness Cloud build infrastructure.

Name

Enter a name summarizing the step's purpose. Harness automatically assigns an Id (Entity Identifier Reference) based on the Name. You can change the Id.

GCP Connector

The Harness connector for the GCP account where the cache is saved.

This step supports GCP connectors that use access key authentication. It does not support GCP connectors that inherit delegate credentials.

Bucket

The name of the GCS bucket containing the target cache.

Key

The key identifying the cache to restore.

You can use the checksum macro to restore a key based on a file's checksum, for example myApp-{{ checksum "path/to/file" }} or gcp-{{ checksum "package.json" }}. The result of the checksum macro is concatenated to the leading string.

The backslash character isn't allowed as part of the checksum value here. This is a limitation of the Go language (golang) template. You must use a forward slash instead, for example:

- Incorrect format: cache-{{ checksum ".\src\common\myproj.csproj" }}

- Correct format: cache-{{ checksum "./src/common/myproj.csproj" }}

Archive Format

Select the archive format. The default archive format is Tar.

Fail if Key Doesn't Exist

Select this option to fail the step if the specified Key doesn't exist.

By default, this option is set to false (unselected).
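
In YAML, this option corresponds to the `failIfKeyNotFound` field that appears in the cross-stage example later in this topic. The following is a minimal sketch that reuses the placeholder connector, bucket, and key values from this topic.

```yaml
- step:
    type: RestoreCacheGCS
    name: Restore Cache From GCS_1
    identifier: RestoreCacheFromGCS_1
    spec:
      connectorRef: account.gcp
      bucket: ci_cache
      key: cache-{{ checksum "package-lock.json" }}
      archiveFormat: Tar
      failIfKeyNotFound: true # fail the step if no cache exists for this key
```

Leaving the option unselected is usually the better fit for the first run of a pipeline, when no cache has been saved yet.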

Run as User

Specify the user ID to use to run all processes in the pod if running in containers. For more information, go to Set the security context for a pod.

Set Container Resources

Maximum resource limits for the container at runtime:

- Limit Memory: Maximum memory that the container can use. You can express memory as a plain integer or as a fixed-point number with the suffixes G or M. You can also use the power-of-two equivalents, Gi or Mi. Do not include spaces when entering a fixed value. The default is 500Mi.

- Limit CPU: The maximum number of cores that the container can use. CPU limits are measured in CPU units. Fractional requests are allowed. For example, you can specify one hundred millicpu as 0.1 or 100m. The default is 400m. For more information, go to Resource units in Kubernetes.

Timeout

Set the timeout limit for the step. Once the timeout limit is reached, the step fails and pipeline execution continues. To set skip conditions or failure handling for steps, go to:

Language-specific requirements

The cache key and paths differ by language.

- Go

- Node.js

- Maven

Go pipelines must reference go.sum for spec.key in Save Cache to GCS and Restore Cache From GCS steps, for example:

spec:
  key: cache-{{ checksum "go.sum" }}

spec.sourcePaths must include /go/pkg/mod and /root/.cache/go-build in the Save Cache to GCS step, for example:

spec:
  sourcePaths:
    - /go/pkg/mod
    - /root/.cache/go-build
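
Put together, a Go stage might pair the two steps as in the following sketch. The step names and identifiers are hypothetical, and the connector and bucket are the placeholder values used elsewhere in this topic.

```yaml
- step:
    type: RestoreCacheGCS
    name: Restore Go Cache # hypothetical name
    identifier: Restore_Go_Cache # hypothetical identifier
    spec:
      connectorRef: account.gcp
      bucket: ci_cache
      key: cache-{{ checksum "go.sum" }}
      archiveFormat: Tar
# Build and test steps run here and populate the module and build caches.
- step:
    type: SaveCacheGCS
    name: Save Go Cache # hypothetical name
    identifier: Save_Go_Cache # hypothetical identifier
    spec:
      connectorRef: account.gcp
      bucket: ci_cache
      key: cache-{{ checksum "go.sum" }} # must match the restore step's key
      sourcePaths:
        - /go/pkg/mod
        - /root/.cache/go-build
      archiveFormat: Tar
```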

npm pipelines must reference package-lock.json for spec.key in Save Cache to GCS and Restore Cache From GCS steps, for example:

spec:
  key: cache-{{ checksum "package-lock.json" }}

Yarn pipelines must reference yarn.lock for spec.key in Save Cache to GCS and Restore Cache From GCS steps, for example:

spec:
  key: cache-{{ checksum "yarn.lock" }}

spec.sourcePaths must include node_modules in the Save Cache to GCS step, for example:

spec:
  sourcePaths:
    - node_modules
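
As an illustration, an npm stage might combine these settings as follows. The step names and identifiers are hypothetical, and the connector and bucket are the placeholder values used elsewhere in this topic. For Yarn, the same sketch applies with `yarn.lock` in place of `package-lock.json`.

```yaml
- step:
    type: RestoreCacheGCS
    name: Restore Node Cache # hypothetical name
    identifier: Restore_Node_Cache # hypothetical identifier
    spec:
      connectorRef: account.gcp
      bucket: ci_cache
      key: cache-{{ checksum "package-lock.json" }}
      archiveFormat: Tar
# Install and build steps run here and populate node_modules.
- step:
    type: SaveCacheGCS
    name: Save Node Cache # hypothetical name
    identifier: Save_Node_Cache # hypothetical identifier
    spec:
      connectorRef: account.gcp
      bucket: ci_cache
      key: cache-{{ checksum "package-lock.json" }} # must match the restore step's key
      sourcePaths:
        - node_modules
      archiveFormat: Tar
```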

Maven pipelines must reference pom.xml for spec.key in Save Cache to GCS and Restore Cache From GCS steps, for example:

spec:
  key: cache-{{ checksum "pom.xml" }}

spec.sourcePaths must include /root/.m2 in the Save Cache to GCS step, for example:

spec:
  sourcePaths:
    - /root/.m2
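
A Maven stage could combine the key and path requirements as in this sketch. The step names and identifiers are hypothetical, and the connector and bucket are the placeholder values used elsewhere in this topic.

```yaml
- step:
    type: RestoreCacheGCS
    name: Restore Maven Cache # hypothetical name
    identifier: Restore_Maven_Cache # hypothetical identifier
    spec:
      connectorRef: account.gcp
      bucket: ci_cache
      key: cache-{{ checksum "pom.xml" }}
      archiveFormat: Tar
# Maven build steps run here and populate the local repository.
- step:
    type: SaveCacheGCS
    name: Save Maven Cache # hypothetical name
    identifier: Save_Maven_Cache # hypothetical identifier
    spec:
      connectorRef: account.gcp
      bucket: ci_cache
      key: cache-{{ checksum "pom.xml" }} # must match the restore step's key
      sourcePaths:
        - /root/.m2
      archiveFormat: Tar
```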

Placement of save and restore cache steps

The placement and sequence of the save and restore cache steps depend on how you're using caching in a pipeline.

- If you use caching to optimize a single stage, the Restore Cache from GCS step occurs before the Save Cache to GCS step.

- If you use caching to share data across stages, the Save Cache to GCS step occurs in the stage where you create the data you want to cache, and the Restore Cache from GCS step occurs in the stage where you want to load the previously-cached data.

The following YAML examples show save and restore cache steps used within the same stage and across two stages.

YAML example: Restore and save cache in the same stage

This YAML example includes one stage. At the beginning of the stage, the cache is restored so the cached data can be used for the build steps. At the end of the stage, if the cached files changed, the updated files are saved to the cache bucket.

stages:
  - stage:
      name: Build
      identifier: Build
      type: CI
      spec:
        cloneCodebase: true
        execution:
          steps:
            - step:
                type: RestoreCacheGCS
                name: Restore Cache From GCS_1
                identifier: RestoreCacheFromGCS_1
                spec:
                  connectorRef: account.gcp
                  bucket: ci_cache
                  key: gcp-{{ checksum "package.json" }}
                  archiveFormat: Tar
            ...
            - step:
                type: SaveCacheGCS
                name: Save Cache to GCS_1
                identifier: SaveCachetoGCS_1
                spec:
                  connectorRef: account.gcp
                  bucket: ci_cache
                  key: gcp-{{ checksum "package.json" }}
                  sourcePaths:
                    - /harness/node_modules
                  archiveFormat: Tar

YAML example: Save and restore cache across stages

This YAML example includes two stages. The first stage creates a cache bucket and saves the cache to the bucket, and the second stage retrieves the previously-saved cache.

stages:
  - stage:
      identifier: GCS_Save_Cache
      name: GCS Save Cache
      type: CI
      variables:
        - name: GCP_Access_Key
          type: String
          value: <+input>
        - name: GCP_Secret_Key
          type: Secret
          value: <+input>
      spec:
        sharedPaths:
          - /.config
          - /.gsutil
        execution:
          steps:
            - step:
                identifier: createBucket
                name: create bucket
                type: Run
                spec:
                  connectorRef: <+input>
                  image: google/cloud-sdk:alpine
                  command: |+
                    echo $GCP_SECRET_KEY > secret.json
                    cat secret.json
                    gcloud auth -q activate-service-account --key-file=secret.json
                    gsutil rm -r gs://harness-gcs-cache-tar || true
                    gsutil mb -p ci-play gs://harness-gcs-cache-tar
                  privileged: false
            - step:
                identifier: saveCacheTar
                name: Save Cache
                type: SaveCacheGCS
                spec:
                  connectorRef: <+input>
                  bucket: harness-gcs-cache-tar
                  key: cache-tar
                  sourcePaths:
                    - <+input>
                  archiveFormat: Tar
  ...
  - stage:
      identifier: gcs_restore_cache
      name: GCS Restore Cache
      type: CI
      variables:
        - name: GCP_Access_Key
          type: String
          value: <+input>
        - name: GCP_Secret_Key
          type: Secret
          value: <+input>
      spec:
        sharedPaths:
          - /.config
          - /.gsutil
        execution:
          steps:
            - step:
                identifier: restoreCacheTar
                name: Restore Cache
                type: RestoreCacheGCS
                spec:
                  connectorRef: <+input>
                  bucket: harness-gcs-cache-tar
                  key: cache-tar
                  archiveFormat: Tar
                  failIfKeyNotFound: true

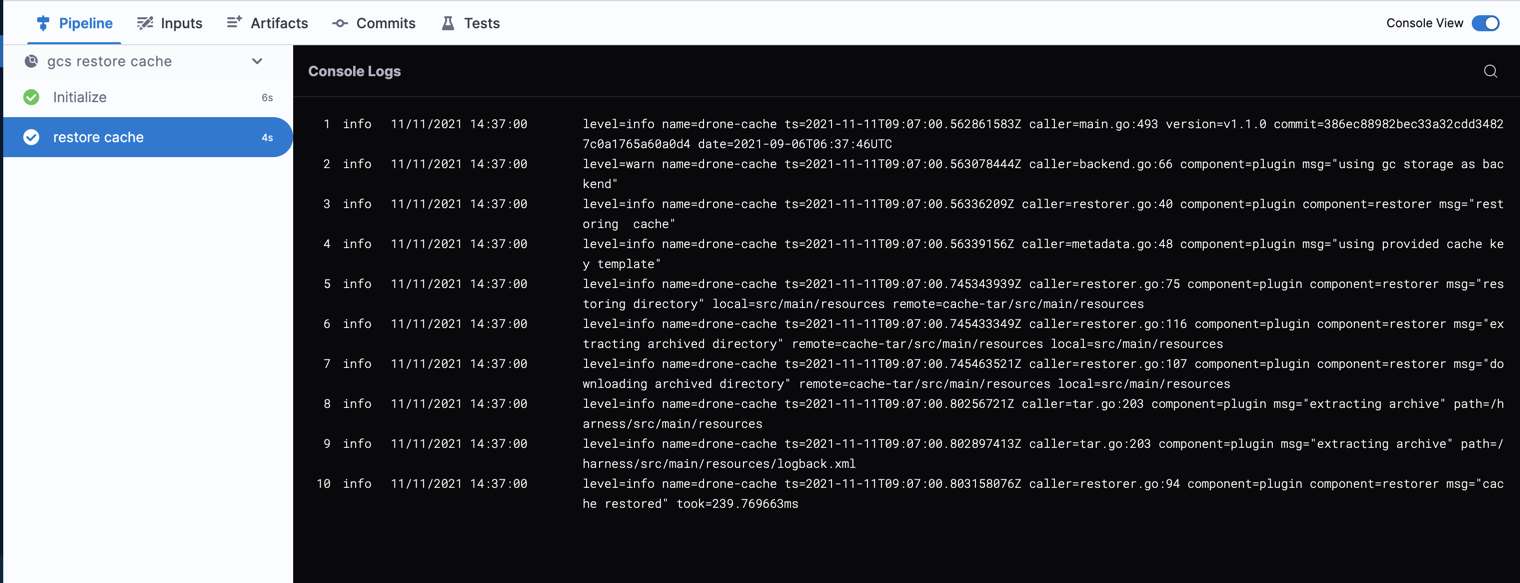

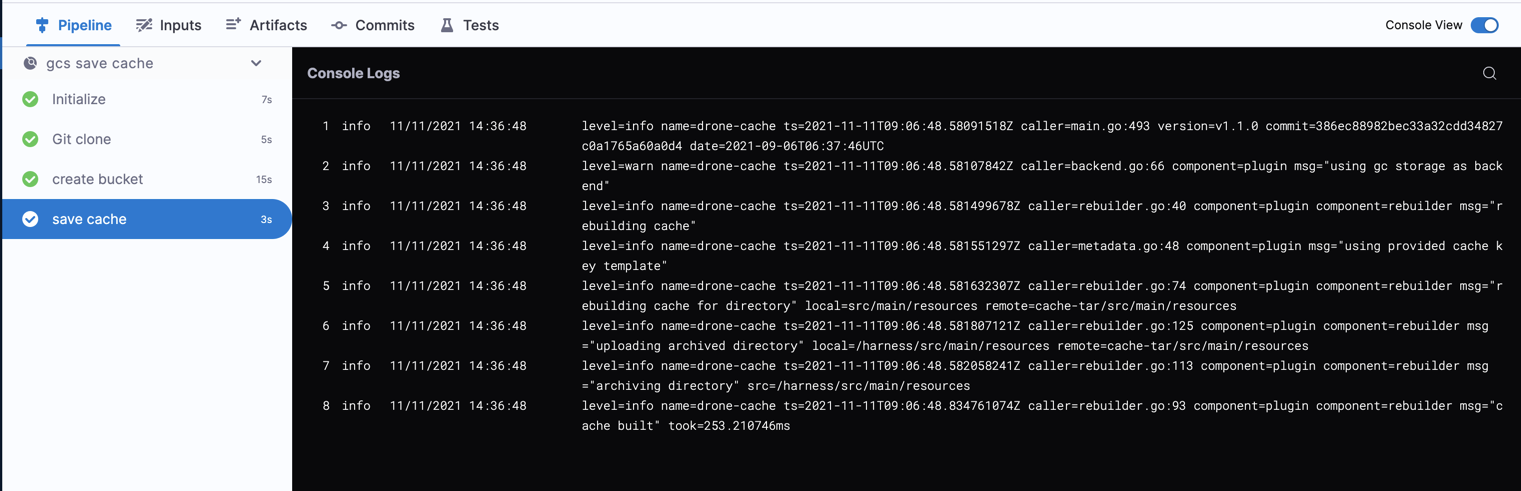

Cache step logs

You can observe and review build logs on the Build details page.

Example: Save Cache to GCS step logs

level=info name=drone-cache ts=2021-11-11T09:06:48.834761074Z caller=rebuilder.go:93 component=plugin component=rebuilder msg="cache built" took=253.210746ms

Example: Restore Cache from GCS step logs

level=info name=drone-cache ts=2021-11-11T09:07:00.803158076Z caller=restorer.go:94 component=plugin component=restorer msg="cache restored" took=239.769663ms